The Future of Programming (and Why I'm Building a New Language)

Quentin Feuillade--Montixi

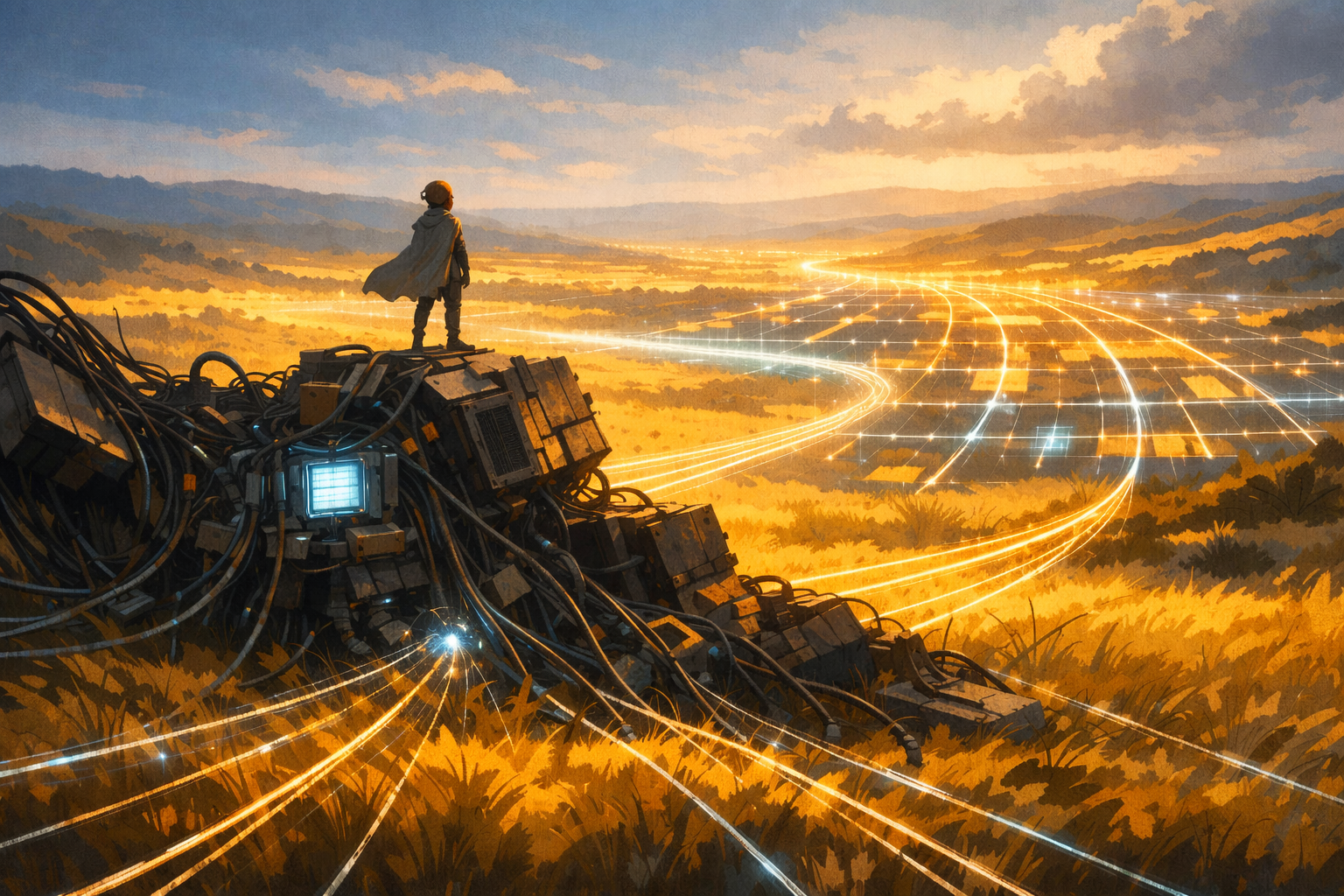

Today I'm open sourcing Weft, a programming language for AI systems.

GitHub: WeaveMindAI/weft

The short version: Weft is a language where LLMs, humans, infrastructure, and APIs are base ingredients (similar to how numbers and operators are base ingredients in other languages). You wire them together, the compiler checks the architecture, and you get a visual graph of your program automatically. I also created an AI builder (Tangle) that writes Weft in a fraction of the tokens it would waste on Python. The compiler catches mistakes and steers Tangle toward systems that are easier to review and structurally more robust.

The problem

A lot has changed in the last couple of years, and most people haven't fully processed it yet. The best programs now have intelligence built into their core: an LLM processing unstructured data, a human reviewing the output, calls to APIs. These new systems think, wait, fail in ways traditional functions never have, and need context to work properly.

But they are all being built with languages that don't understand any of those concepts. Everything gets treated as "just code and HTTPS calls". So you end up writing the same repetitive, brittle plumbing code everyone else is writing.

When something goes wrong (and it usually does: hallucinations, low-quality or harmful outputs, prompt injections, etc.), the fixes are often simple. Add a check, add a filter, improve the prompt, put a human in the loop. In aviation, they call this the Swiss cheese model: stack enough layers, and the holes stop lining up. I've seen this firsthand; with the right layers, you can make a system really hard to hijack and really powerful. But each layer means more plumbing code, and only a handful of people truly know how to "improve the prompt". The worst part is that if the layers aren't done correctly, they make your system rigid, and it loses what makes it an intelligent program in the first place.

After a month, you might have hundreds of thousands of lines of code, with the real logic buried somewhere in a few thousand of them.

And you can't see any of it, and neither can anyone else. Most of the code was written by AI, you didn't review it, and you probably couldn't if you tried. When something breaks, you ask another AI to fix it, but that AI didn't write the original code either. So it just adds patches on top of patches. Now you've got duplicated logic, wrong architecture, and silently swallowed errors everywhere. No human understands it, and no AI has enough context to see the whole picture. I've watched small teams spend hundreds of thousands of dollars just trying to keep this kind of thing alive.

The language

I'm working on two things to change that. The first is a coordination language.

It sits one level of abstraction above traditional languages. Instead of writing all the plumbing yourself, you connect "nodes" for the things you need: AI calls, databases, email, Slack, human approvals, short pieces of code, scheduled tasks. If something isn't there, you can build your own by customizing the building blocks the language ships with.

You can also see it as a graph. Click any node to see what goes in and comes out. When something runs, you can watch it flow. When something breaks, you can pinpoint exactly where.

If you've tried graph-based programming before, I know where your head is going. Graphs can quickly turn into a tangled mess. Weft avoids that by making everything foldable. Any group of nodes can collapse into a single node with a description, typed inputs, and typed outputs, and groups nest within groups. You can start from the top: five groups for the big picture. Zoom into the one that matters; everything else stays collapsed. When your system grows to a hundred nodes, it still looks like five at the top level. The complexity is organized.
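To make the folding idea concrete, here is a minimal Python sketch of nested groups that render as a single line when collapsed. This is not Weft syntax or Weft's implementation; the `Leaf`, `Group`, and `render` names, and the example pipeline, are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Leaf:
    """A single node, e.g. an AI call or a Slack message (hypothetical)."""
    name: str


@dataclass
class Group:
    """A foldable group: a name, a description, and nested children."""
    name: str
    description: str
    children: list = field(default_factory=list)


def count_leaves(item) -> int:
    """Count the individual nodes hidden inside an item."""
    if isinstance(item, Leaf):
        return 1
    return sum(count_leaves(child) for child in item.children)


def render(item, expanded, depth=0):
    """Return display lines; groups not named in `expanded` stay folded."""
    indent = "  " * depth
    if isinstance(item, Leaf):
        return [f"{indent}{item.name}"]
    if item.name not in expanded:
        return [f"{indent}[{item.name}] {item.description} ({count_leaves(item)} nodes)"]
    lines = [f"{indent}{item.name}/"]
    for child in item.children:
        lines += render(child, expanded, depth + 1)
    return lines


# Illustrative system: two top-level groups, one of them nested.
intake = Group("intake", "Collect and clean requests", [Leaf("form"), Leaf("sanitizer")])
approval = Group("approval", "Human sign-off", [Leaf("slack_ping"), Leaf("approve_button")])
review = Group("review", "Draft and approve a reply", [Leaf("drafter"), approval])
system = Group("system", "Support pipeline", [intake, review])

top_level = render(system, expanded={"system"})
zoomed = render(system, expanded={"system", "review"})
print("\n".join(zoomed))
```

Expanding only `system` shows one line per top-level group, however many nodes sit underneath. Adding `review` to the expanded set opens that group while `intake` and the nested `approval` group stay folded.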

It also works in code: connecting two nodes takes just a few characters. No need to hunt through the graph for the right spot. An AI builder can focus on exactly what it needs while still having a clear view of the whole system with very few tokens.

Because the program has structure, you can reason about it in ways that are impossible with a flat codebase. The compiler catches missing connections or type mismatches before anything runs. And because it's a graph, you can do algorithmic analysis on the architecture: automatically find when user input flows into an AI without a filter or when multiple AI calls are chained without a hallucination check.
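As a rough illustration of both checks, the sketch below models a program as a typed graph in plain Python. It is not how the Weft compiler works internally; the node kinds (`user_input`, `filter`, `llm`, `output`), the string type labels, and the function names are all assumptions made up for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    kind: str            # hypothetical kinds: "user_input", "filter", "llm", "output"
    in_type: str = ""    # type label this node accepts
    out_type: str = ""   # type label this node produces


@dataclass
class Graph:
    nodes: dict = field(default_factory=dict)
    edges: dict = field(default_factory=dict)

    def add(self, node):
        self.nodes[node.name] = node
        self.edges.setdefault(node.name, [])

    def connect(self, src, dst):
        self.edges[src].append(dst)


def type_errors(g):
    """Return (src, dst, out_type, in_type) for every mismatched connection."""
    errors = []
    for src, dsts in g.edges.items():
        for dst in dsts:
            out_t, in_t = g.nodes[src].out_type, g.nodes[dst].in_type
            if out_t != in_t:
                errors.append((src, dst, out_t, in_t))
    return errors


def unfiltered_paths(g):
    """Return paths from a user input to an LLM that pass through no filter."""
    found = []

    def walk(name, path):
        node = g.nodes[name]
        if node.kind == "filter":
            return                      # this branch is protected
        if node.kind == "llm":
            found.append(path)          # reached an LLM with no filter on the way
            return
        for nxt in g.edges[name]:
            if nxt not in path:         # guard against cycles
                walk(nxt, path + [nxt])

    for node in g.nodes.values():
        if node.kind == "user_input":
            walk(node.name, [node.name])
    return found


g = Graph()
g.add(Node("form", "user_input", out_type="text"))
g.add(Node("sanitizer", "filter", in_type="text", out_type="text"))
g.add(Node("summarizer", "llm", in_type="text", out_type="text"))
g.add(Node("classifier", "llm", in_type="text", out_type="label"))
g.add(Node("notify", "output", in_type="text"))

g.connect("form", "summarizer")      # raw user input straight into an LLM
g.connect("form", "sanitizer")
g.connect("sanitizer", "classifier")
g.connect("classifier", "notify")    # "label" wired into a "text" input

print(type_errors(g))
print(unfiltered_paths(g))
```

Both checks run over the structure alone, before any node executes. The same traversal could be adapted to flag chained LLM calls with no check between them, under whatever definition of "check" the analysis adopts.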

Everything is typed and scoped, so you can build one piece at a time. You can tell a group "you're not ready yet, just return this data" and focus somewhere else. The compiler has already checked that the connections are valid and the types match. Test it. If it works, move on. By the time the whole system is done, you already know it works.
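One way to picture "you're not ready yet, just return this data" is a group with a declared output type and a canned value that stands in until the real implementation exists. The Python sketch below is only an analogy: `GroupSpec`, its fields, and the example data are hypothetical, and it checks types at run time, whereas the checks described above happen at compile time.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class GroupSpec:
    """A group with a declared output type and an optional stand-in value."""
    name: str
    output_type: type
    impl: Optional[Callable[[Any], Any]] = None   # real logic, once it exists
    stub: Any = None                              # canned data used until then

    def run(self, value):
        result = self.impl(value) if self.impl is not None else self.stub
        # The stub must honour the same output contract as the real thing.
        if not isinstance(result, self.output_type):
            raise TypeError(
                f"{self.name} must return {self.output_type.__name__}, "
                f"got {type(result).__name__}"
            )
        return result


# "enrich" is not built yet, so it just returns fixed data of the right type.
enrich = GroupSpec("enrich", dict, stub={"company": "ACME"})
# "score" is real and can already be tested against the stubbed input.
score = GroupSpec("score", int, impl=lambda lead: len(lead["company"]))

result = score.run(enrich.run("lead@example.com"))
print(result)
```

Because the stub satisfies the same typed contract, the downstream group can be built and tested now, and swapping in the real `enrich` later does not change anything it depends on.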

The builder

The second thing I am building is Tangle. This is actually where it all started.

I didn't set out to build a language; I wanted to make an AI coding agent for building robust AI-powered systems. Something that could help people build quickly and correctly. But I kept having the same issues: the AI kept falling into the wrong patterns, and no signal was powerful enough to force it away from its bad habits.

The core bet I made at this point was to stop trying to steer the model, and instead build the language that would enforce good practices. So I built both together. The compiler catches mistakes before the user ever sees them, the builder self-corrects, and the language does the heavy lifting of abstracting complexity away. Almost no tokens get wasted. This is why it is so fast at building production-ready systems.

I've spent years figuring out how to prompt effectively, where to add safeguards, and how to set context so models perform their best. All of that is going straight into the builder. I could have built on top of an existing AI coding tool, but most of them aren't trying to make models more efficient; they're trying to milk as many tokens out of their users as possible. Shortcuts for humans have existed for decades (copy, paste, rename across a codebase, docstrings). I still can't believe people are shipping AI tamagotchis when these basic features don't even exist for AI yet.

Humans in the loop

I don't think the medium-term future is about removing humans from the loop. It's about putting them in the right spots: taste, field experience, judgment calls. The AI handles the engineering, the plumbing, the testing. Ideally, it mocks everything up, tests autonomously, fixes what breaks, and hands you a clean result. You plug in the real services when you're ready. But decisions that need domain expertise and review steps that need human judgment should stay human. Weft makes it easy to keep humans involved through a built-in browser extension. I believe that the best systems will be a collaboration between AIs and people who have field expertise and good taste.

Open source, opinionated

The language is open source because my goal is to get adoption, and I think that you should be able to see how it works, trust it, break it apart, and add your own nodes. Right now everything is standalone because it's just me and it's only been a couple of months. The goal is to integrate with existing tools (VS Code first, then deployment on your own infrastructure, etc.). Some parts are built, some are in progress, and some I'll figure out as I go.

Both the builder and the language are opinionated by design. I've built a lot of systems and broken even more. I have a pretty good sense of what works, and I'd rather bake in solid patterns and be wrong about a few things than build something so flexible it ends up useless. The language guides you toward the right patterns, and the builder carries the knowledge of good AI system engineering. When I miss the mark, I'll adjust. But the default should be good, not neutral.

I've spent years waving my arms at problems. I want to give people the tools to actually build well, and put my experience into the product instead of into another report that nobody reads.

Technical deep dive: DESIGN.md

If any of this resonates, or you think I'm missing something, reach out: quentin@weavemind.ai

Written by

Quentin Feuillade--Montixi

Founder & CEO

3 years evaluating frontier AI systems, including red teaming for OpenAI and Anthropic, and capability evaluations at METR. Founded an AI evaluation startup in Paris. Building WeaveMind after seeing the same operational failures repeat across production AI teams.